Tokenlimits

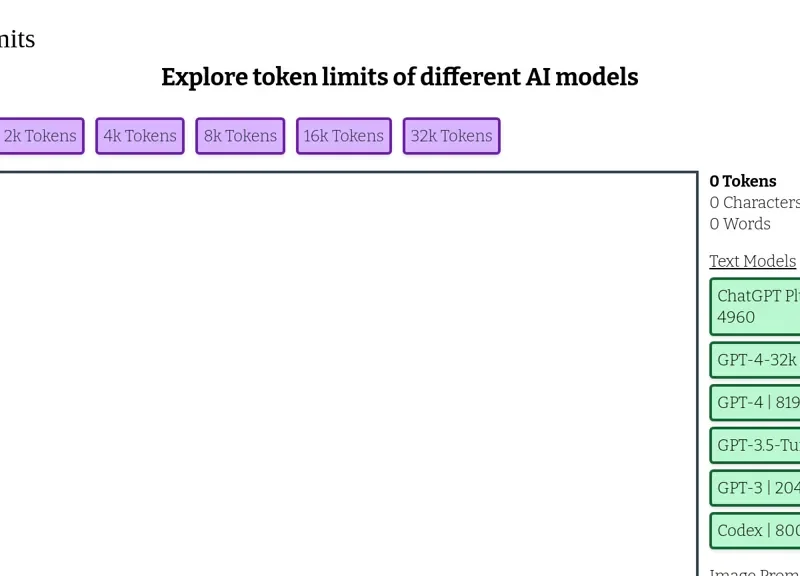

Tokenlimits is a tool for exploring the token limits of various AI models. It lists token thresholds from 1k to 32k tokens for models such as GPT-4 and GPT-3.5-turbo. Developers and researchers can use it to check the maximum input size a model accepts, so they can size prompts for language and image prompt models to fit within that limit.
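To illustrate the kind of check such a tool supports, here is a minimal Python sketch that estimates a prompt's token count and finds the smallest threshold it fits under. It is not Tokenlimits' own code: the threshold list, the four-characters-per-token rule of thumb, and the function names `estimate_tokens` and `smallest_fitting_limit` are all assumptions made for this example. A real tokenizer will give different counts depending on the model and the language of the text.

```python
import math

# Illustrative thresholds between 1k and 32k tokens (assumed values, not taken from the tool).
THRESHOLDS = [1024, 2048, 4096, 8192, 16384, 32768]

# Rough rule of thumb for English text; real tokenizers vary by model and language.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Estimate the token count of `text` from its character length."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def smallest_fitting_limit(text: str, reserved_for_output: int = 0):
    """Return the smallest threshold that holds the prompt plus any tokens
    reserved for the model's reply, or None if no threshold is large enough."""
    needed = estimate_tokens(text) + reserved_for_output
    for limit in THRESHOLDS:
        if needed <= limit:
            return limit
    return None


# Hypothetical prompt used only to exercise the functions.
prompt = "Summarize the following report. " * 200
print(estimate_tokens(prompt), smallest_fitting_limit(prompt, reserved_for_output=500))
```

Reserving room for the reply matters because, for many models, the limit covers the prompt and the generated output together, so a prompt that exactly fills the limit leaves no space for a response.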