ExLlama

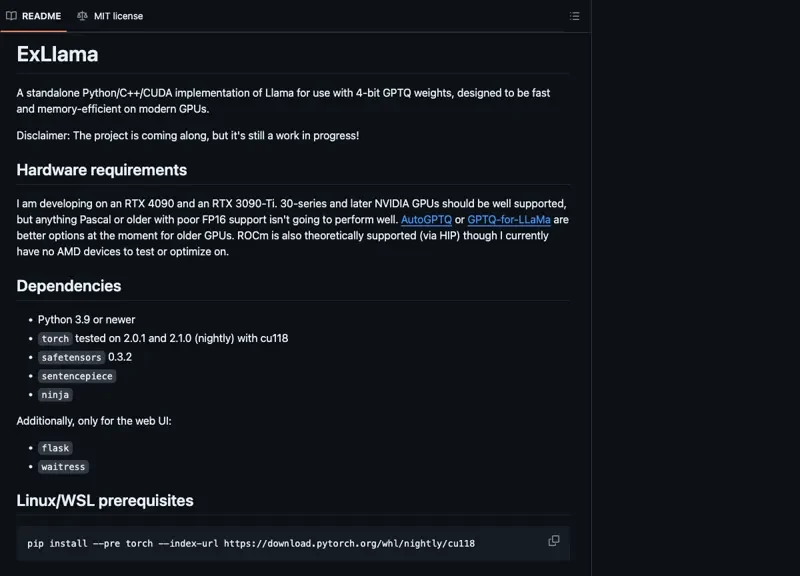

ExLlama is a more memory-efficient rewrite of the Hugging Face transformers implementation of LLaMA, designed for use with quantized (4-bit GPTQ) weights. Its goal is fast inference with low memory consumption on modern NVIDIA GPUs, including the RTX series.
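To see why quantized weights matter for memory, a back-of-the-envelope estimate helps. The sketch below is a generic calculation, not part of ExLlama. It assumes a hypothetical 7-billion-parameter model and counts only the raw weight storage. It ignores quantization metadata (scales and zero points), the KV cache, activations and framework overhead, so real usage is somewhat higher.

```python
def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed to hold the weights alone, in GiB.

    Ignores quantization metadata (scales, zero points), the KV cache,
    activations and framework overhead, so real usage is higher.
    """
    total_bytes = n_params * bits_per_weight / 8  # bits -> bytes
    return total_bytes / 2**30                    # bytes -> GiB


if __name__ == "__main__":
    # Hypothetical 7-billion-parameter model: FP16 vs 4-bit weights.
    for bits in (16, 4):
        print(f"{bits:>2}-bit: {weight_memory_gib(7e9, bits):.2f} GiB")
```

This prints about 13.04 GiB for 16-bit weights and 3.26 GiB for 4-bit weights. That fourfold reduction in weight storage is what lets larger models fit on a single consumer GPU.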

Key features include support for sharded models, configurable processor affinity for tuning performance, and flexible stop conditions for text generation. It is aimed at developers and researchers who want to run large LLaMA models with less memory overhead than the stock transformers implementation requires.
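Stop conditions decide when a generation loop ends. The sketch below shows one common approach. It is a generic illustration, not ExLlama's generator API, and the function name `generate_with_stop_conditions` and its parameters are hypothetical. Generation stops on a stop token ID, on a stop string appearing in the decoded output, or when a length limit is reached. `next_token` and `decode` stand in for a real model and tokenizer. For simplicity the loop re-decodes all new tokens on every step, which is quadratic in output length but easy to follow.

```python
from typing import Callable, Iterable


def generate_with_stop_conditions(
    next_token: Callable[[list[int]], int],
    decode: Callable[[list[int]], str],
    prompt_ids: list[int],
    stop_token_ids: Iterable[int] = (),
    stop_strings: Iterable[str] = (),
    max_new_tokens: int = 256,
) -> str:
    """Generate tokens until a stop token, a stop string or the length limit.

    Returns the generated text (without the prompt), cut before any
    matched stop string. A stop token is not included in the output.
    """
    stop_ids = set(stop_token_ids)
    stops = [s for s in stop_strings if s]
    ids = list(prompt_ids)
    new_ids: list[int] = []
    for _ in range(max_new_tokens):
        tok = next_token(ids)
        if tok in stop_ids:  # stop token: end without emitting it
            break
        ids.append(tok)
        new_ids.append(tok)
        text = decode(new_ids)
        # Stop string: cut the text at the earliest match, if any.
        hits = [i for i in (text.find(s) for s in stops) if i != -1]
        if hits:
            return text[: min(hits)]
    return decode(new_ids)


if __name__ == "__main__":
    # Toy vocabulary and a scripted "model" standing in for real sampling.
    vocab = {0: "<eos>", 1: "Hello", 2: ",", 3: " world", 4: "\n", 5: "User:"}
    script = iter([1, 2, 3, 4, 5, 3, 0])
    out = generate_with_stop_conditions(
        next_token=lambda ids: next(script),
        decode=lambda ids: "".join(vocab[i] for i in ids),
        prompt_ids=[],
        stop_token_ids=[0],
        stop_strings=["\nUser:"],
    )
    print(repr(out))  # 'Hello, world'
```

Stop strings are checked on decoded text rather than on token IDs because a string such as `"\nUser:"` may be split across several tokens in ways that depend on the tokenizer.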