Confident AI

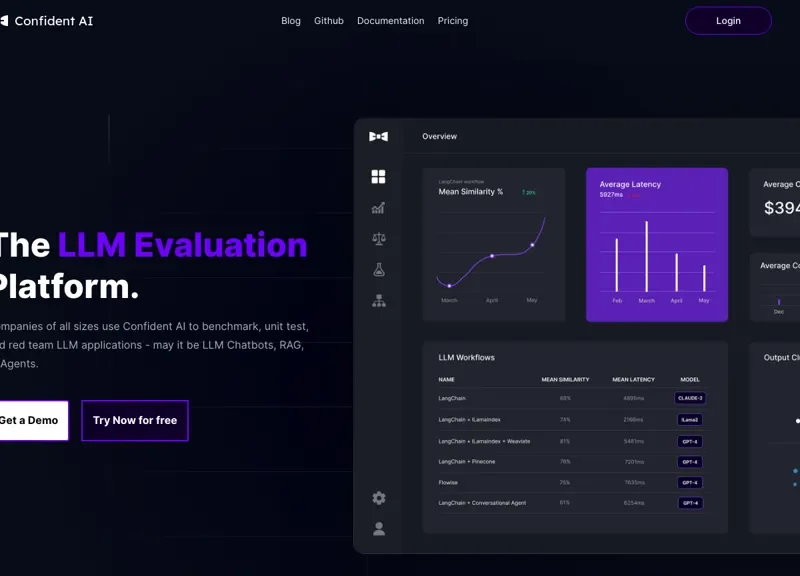

Confident AI is an evaluation platform for large language models (LLMs). Companies use it to benchmark and unit test LLM applications, including chatbots and retrieval-augmented generation (RAG) systems. Teams can generate, manage, and share evaluation datasets and test cases in one place, so testing is centralized rather than scattered across individual projects.

The platform offers more than 12 custom metrics and automatic regression tracking, so users can check that an LLM application still behaves as expected after each change. It also supports A/B testing to compare configurations and pick the best-performing one, along with detailed monitoring that streamlines workflows and saves development teams time.
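To make the ideas of metric-based unit testing, regression tracking, and A/B comparison concrete, here is a minimal, platform-independent sketch in plain Python. It does not use Confident AI's actual API. Every name in it (`TestCase`, `keyword_coverage`, `evaluate`, `has_regressed`, `config_a`, `config_b`) is hypothetical, and the keyword-matching metric is a toy stand-in for the platform's real metrics.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Sequence, Tuple


@dataclass(frozen=True)
class TestCase:
    """One entry in an evaluation dataset (hypothetical structure)."""
    prompt: str
    expected_keywords: Tuple[str, ...]


def keyword_coverage(output: str, case: TestCase) -> float:
    """Toy metric: fraction of expected keywords found in the output."""
    if not case.expected_keywords:
        return 1.0
    text = output.lower()
    hits = sum(1 for kw in case.expected_keywords if kw.lower() in text)
    return hits / len(case.expected_keywords)


def evaluate(app: Callable[[str], str], dataset: Sequence[TestCase]) -> float:
    """Run the application on every test case and average the metric."""
    return mean(keyword_coverage(app(case.prompt), case) for case in dataset)


def has_regressed(baseline: float, candidate: float, tolerance: float = 0.05) -> bool:
    """Flag a regression when the candidate falls below baseline by more than tolerance."""
    return candidate < baseline - tolerance


# Stand-ins for two configurations of an LLM application (assumed, not real models).
def config_a(prompt: str) -> str:
    return "Paris is the capital of France."


def config_b(prompt: str) -> str:
    return "I am not sure."


dataset = [TestCase("What is the capital of France?", ("Paris", "France"))]

score_a = evaluate(config_a, dataset)
score_b = evaluate(config_b, dataset)

# A/B comparison: keep whichever configuration scores higher on the dataset.
best = "A" if score_a >= score_b else "B"

# Regression check: treat A as the stored baseline and B as the new candidate.
regressed = has_regressed(score_a, score_b)
```

In practice the metric would be something richer than keyword matching (for example, a relevance or faithfulness score), and the baseline score would be stored between runs rather than recomputed, but the shape of the check stays the same: score a fixed dataset, compare against a previous result, and fail when the score drops.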